10 Things You Can Now Do With AI in 2026 That Were Impossible in 2024

Two years. That’s all it took.

In early 2024, AI felt like a really impressive autocomplete. You’d type something, it’d spit something back, and you’d spend 20 minutes editing it into shape. Useful? Sure. Revolutionary? Not quite.

Fast forward to 2026, and the conversation has completely changed. Not because of hype — hype has been here since 2022. But because AI actually does things now. It writes code and runs it. It generates video with synchronized audio from a text prompt. It operates your computer while you’re making coffee. It sits inside research labs and proposes experiments that scientists then actually run.

This isn’t a “wow, the future is here” puff piece. It’s a clear-eyed look at 10 specific things that were either technically impossible, prohibitively expensive, or so bad they were unusable in 2024 — and that work right now, in 2026, at a price regular people and businesses can actually afford.

Some of them will surprise you. Some of them should probably concern you a little. All of them are real.

1. Generate Cinematic Video With Synchronized Audio From a Text Prompt

Then (2024): OpenAI teased Sora to a thunderstruck audience in February 2024. The demos were jaw-dropping. The reality: it wasn’t publicly available, clips maxed out at around 60 seconds, and audio was completely absent. The actual Sora that shipped in late 2024 was considerably less impressive than the preview — and by April 2026, it was shut down entirely after burning through $15 million per day in compute with barely $2 million in total lifetime revenue.

Now (2026): The field has matured into something genuinely useful. Google’s Veo 3.1, Runway Gen-4.5, Kling 3.0, and Seedance 2.0 (from ByteDance) all produce videos with native, synchronized audio — sound effects, ambient noise, and in some cases dialogue — generated alongside the visuals in a single pass. Resolution has jumped from 720p experiments to native 4K. Clip lengths now reach 2 minutes. Pricing per 10-second clip has dropped 65% compared to 2024 rates.

The honest caveat: long-form content and complex multi-character scenes still break. Physics can still do weird things. But for marketing content, social media, concept visualization, and product demos? AI video is a legitimate production tool today — not a parlor trick.


2. Let AI Write, Run, and Fix Its Own Code Autonomously

Then (2024): GitHub Copilot and ChatGPT could suggest code snippets. You still had to understand what they were suggesting, copy it over, debug it yourself, and keep the AI on a very short leash. Autonomous coding — where the AI plans, executes, tests, and iterates without you holding its hand at every step — was a research concept, not a product.

Now (2026): Claude Code is probably the clearest example of what changed. Anthropic’s own engineers report that the vast majority of new Claude model code is now written by Claude itself. The tool reads your entire codebase, plans changes across multiple files, runs tests, reads the error messages, fixes the bugs, and loops until the test suite passes — all without prompting each step individually.

Stripe deployed Claude Code across 1,370 engineers. One team completed a 10,000-line Scala-to-Java migration in four days — work estimated at ten engineer-weeks. That’s not a benchmark number. That’s a documented production outcome.

GPT-5.4 from OpenAI hit 75% on OSWorld-V — which simulates real desktop productivity tasks — slightly above the human baseline of 72.4%. Two years ago, these systems could reliably handle maybe a two-minute task. Now they’re completing complex software engineering jobs that take human experts five hours.
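
The test-and-fix loop described above fits in a few lines. This is a minimal sketch under stated assumptions, not how Claude Code is implemented. The "codebase" is a dict of source strings, the test suite is one hard-coded check, and `scripted_model` is a hypothetical stand-in for the model call a real tool would make with the error text.

```python
from typing import Callable, Dict, Tuple

# Hypothetical stand-in for a repository: file name -> source text.
Codebase = Dict[str, str]


def run_tests(codebase: Codebase) -> Tuple[bool, str]:
    """Run a one-check test suite; return (passed, error text the agent will read)."""
    namespace: dict = {}
    try:
        exec(codebase["mathutils.py"], namespace)
        result = namespace["add"](2, 3)
        if result != 5:
            raise AssertionError(f"add(2, 3) returned {result}, expected 5")
        return True, ""
    except Exception as exc:  # any failure becomes feedback for the next attempt
        return False, f"{type(exc).__name__}: {exc}"


def agent_loop(
    codebase: Codebase,
    propose_fix: Callable[[Codebase, str], Codebase],
    max_iters: int = 5,
) -> Tuple[bool, int, Codebase]:
    """Run tests, read the error, apply a fix, and repeat until green or out of budget."""
    for attempt in range(1, max_iters + 1):
        passed, error = run_tests(codebase)
        if passed:
            return True, attempt, codebase
        codebase = propose_fix(codebase, error)
    return False, max_iters, codebase  # budget spent; the last fix was never tested


def scripted_model(codebase: Codebase, error: str) -> Codebase:
    """Hypothetical placeholder for a model call: patches one known bug per error type."""
    src = codebase["mathutils.py"]
    if error.startswith("NameError"):
        src = src.replace("bb", "b")
    elif error.startswith("AssertionError"):
        src = src.replace("a - b", "a + b")
    return {**codebase, "mathutils.py": src}


buggy = {"mathutils.py": "def add(a, b):\n    return a - bb\n"}
ok, attempts, fixed = agent_loop(buggy, scripted_model)
print(ok, attempts)  # True 3
```

The `max_iters` cap matters in practice. An agent that keeps failing should stop and report back rather than keep editing forever.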

Check it: Claude Code Interpreter

3. Control a Computer With AI: Click, Type, Navigate, Actually Do Stuff

Then (2024): Anthropic previewed “Computer Use” in late 2024 as a research beta. It was slow, unreliable, and mostly a proof of concept. The demos were impressive in the same way that early voice assistants were impressive — right up until you tried to use them for anything real.

Now (2026): GPT-5.4 is the first model to exceed human-level performance on the OSWorld benchmark for computer control tasks. Claude Code’s Computer Use feature lets it click buttons, type text, take screenshots, and operate desktop applications as part of autonomous workflows. You can schedule it to run nightly, log results, and handle exceptions on its own.

This is genuinely new territory. An AI that can operate software it’s never been explicitly trained on, navigate GUIs, and complete multi-step desktop tasks without human step-by-step guidance — that didn’t exist as a usable product in 2024. Now it’s a checkbox in a developer settings menu.

Check it: Anthropic Computer Use Documentation

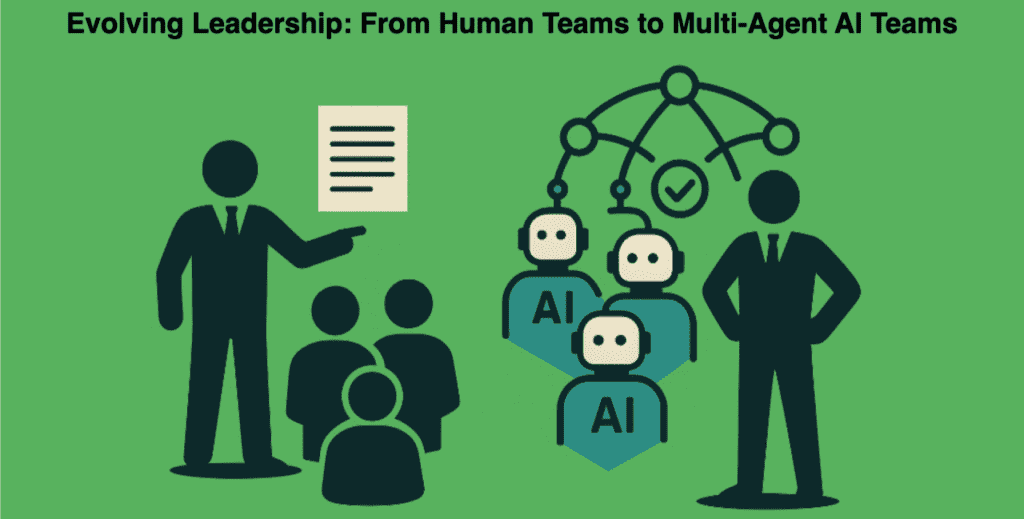

4. Run Multi-Agent AI Teams That Work in Parallel

Then (2024): AI agents were single-threaded. You talked to one model. It answered. You talked again. The concept of multiple AI agents collaborating — one handling research, one writing code, one reviewing it, all coordinating — was purely experimental. Tools like AutoGPT gave people a taste, but the results were notoriously unreliable and funny-for-the-wrong-reasons.

Now (2026): Claude Code’s Agent Teams feature lets developers spawn parallel Claude instances that actually coordinate. One agent handles the frontend, another the backend, another writes tests. They share task lists and communicate results back to an orchestrator. Gartner projects that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025.

IBM’s analysts describe this as the rise of “super agents” — systems that can plan, call tools, and complete complex tasks across multiple environments (your browser, your editor, your inbox) without you managing them individually. The infrastructure to actually do this didn’t exist at a commercial scale in 2024. It does now.
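
Here is a small sketch of the orchestrator-and-shared-task-list pattern using Python threads. It is a toy built on assumptions, not Claude Code’s Agent Teams API. `TaskBoard`, `agent`, and `orchestrate` are hypothetical names, and each "agent" simply marks its task done where a real system would call a model.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Task:
    task_id: str
    role: str               # which specialist should pick it up
    status: str = "open"    # open -> claimed -> done
    result: Optional[str] = None


@dataclass
class TaskBoard:
    """Shared task list that every agent reads and updates under one lock."""
    tasks: List[Task]
    lock: threading.Lock = field(default_factory=threading.Lock)

    def claim(self, role: str) -> Optional[Task]:
        with self.lock:  # two agents can never claim the same task
            for task in self.tasks:
                if task.status == "open" and task.role == role:
                    task.status = "claimed"
                    return task
            return None

    def complete(self, task: Task, result: str) -> None:
        with self.lock:
            task.status = "done"
            task.result = result


def agent(name: str, role: str, board: TaskBoard) -> List[str]:
    """Hypothetical worker: keeps claiming tasks for its role until none are left."""
    finished = []
    while (task := board.claim(role)) is not None:
        board.complete(task, f"{name} finished {task.task_id}")  # a model call would go here
        finished.append(task.task_id)
    return finished


def orchestrate(board: TaskBoard, team: Dict[str, str]) -> Dict[str, List[str]]:
    """Run one agent per role in parallel and collect what each reports back."""
    with ThreadPoolExecutor(max_workers=len(team)) as pool:
        futures = {name: pool.submit(agent, name, role, board) for name, role in team.items()}
        return {name: future.result() for name, future in futures.items()}


board = TaskBoard([
    Task("login-page", "frontend"),
    Task("auth-endpoint", "backend"),
    Task("session-store", "backend"),
    Task("auth-tests", "tests"),
])
report = orchestrate(board, {"agent-ui": "frontend", "agent-api": "backend", "agent-qa": "tests"})
print(report)
```

The lock is the part that does the coordinating: it is what keeps parallel workers from duplicating or clobbering each other’s work on the shared list.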

5. Get AI as an Active Participant in Scientific Discovery

Then (2024): AI could summarize papers, answer questions about chemistry, and help researchers write grant applications. Genuinely useful, genuinely limited. The model was a search engine with better prose, not a research collaborator.

Now (2026): The shift is from “AI that reads science” to “AI that does science.” Google DeepMind’s AlphaEvolve pairs Gemini with an evolutionary loop in which automated evaluators score and filter every proposed solution. It is producing new algorithms and has improved on the best known solutions to several open problems. Inside Google, it has already found a more efficient scheduling heuristic for the company’s data centers and proposed a simplified arithmetic circuit for an upcoming TPU chip.
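
The propose, verify, keep-the-best cycle behind this kind of system can be illustrated with a toy. In this sketch the problem (recovering the coefficients of a quadratic from sample points), the validity rule, and the random integer mutations are all assumptions. In AlphaEvolve, Gemini proposes code changes and a task-specific evaluator scores them.

```python
import random
from typing import List, Optional, Tuple

# A candidate is a tuple of integer coefficients (a, b, c) for a*x**2 + b*x + c.
Candidate = Tuple[int, ...]

# Toy task with a checkable score: recover a hidden rule from sample points.
XS = list(range(-3, 4))
TARGET = [2 * x * x - 3 * x + 1 for x in XS]


def evaluate(cand: Candidate) -> Optional[float]:
    """Automated verifier: None for invalid candidates, else squared error (lower is better)."""
    if any(abs(k) > 10 for k in cand):  # hypothetical validity rule
        return None
    a, b, c = cand
    return float(sum((a * x * x + b * x + c - t) ** 2 for x, t in zip(XS, TARGET)))


def mutate(cand: Candidate, rng: random.Random) -> Candidate:
    """Proposal step; here each coefficient is nudged by -1, 0, or +1 instead of an LLM edit."""
    while True:
        child = tuple(k + rng.choice((-1, 0, 1)) for k in cand)
        if child != cand:
            return child


def evolve(
    start: Candidate, generations: int = 200, children: int = 8, seed: int = 0
) -> Tuple[Candidate, float, List[float]]:
    rng = random.Random(seed)
    best = start
    best_score = evaluate(start)
    if best_score is None:
        raise ValueError("starting candidate is invalid")
    history = [best_score]
    for _ in range(generations):
        for _ in range(children):
            child = mutate(best, rng)
            score = evaluate(child)
            # Keep a child only if the verifier accepts it and it is no worse than the current best.
            if score is not None and score <= best_score:
                best, best_score = child, score
        history.append(best_score)
    return best, best_score, history


best, score, history = evolve((0, 0, 0))
print(best, score)
```

Because a child replaces the current best only when its score is no worse, the recorded best score can never get worse from one generation to the next. The verifier is what makes that guarantee meaningful.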

Microsoft’s Peter Lee describes 2026 as the year AI stops summarizing papers and starts generating hypotheses, running experiments via tool access, and collaborating with human researchers as a genuine lab assistant. Several AI-discovered drug candidates are now in mid-to-late-stage clinical trials. These aren’t just computational predictions: they’re compounds that made it through preclinical development and are now being tested in humans.

That trajectory didn’t exist in 2024. It’s happening now.

Check it: DeepMind AlphaFold Database

6. Get Real-Time, Context-Aware AI Shopping That Actually Buys Things

Then (2024): You could ask ChatGPT to recommend a laptop. It would give you a list. You’d click away, check prices yourself, compare specs yourself, and buy it yourself. The AI was a suggestion engine with a knowledge cutoff, not a shopping agent.

Now (2026): AI shopping agents can browse live inventory, compare real-time pricing across retailers, analyze product reviews, factor in your specific constraints and preferences, and — critically — complete the purchase. Salesforce projected AI-driven transactions in the billions during the 2025 holiday season. MIT Technology Review describes it as having a personal shopper available 24/7, one that can find the best deal, handle the comparison research, and take care of the purchasing and delivery details.

The 2024 version could suggest. The 2026 version can execute. That’s a categorically different capability.
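
The difference between suggesting and executing comes down to the last step. A minimal sketch, assuming offers have already been fetched from retailers and reviews summarized into a single rating. `Offer`, `Constraints`, and the `place_order` checkout callback are hypothetical, not any retailer’s or Salesforce’s API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Offer:
    retailer: str
    product: str
    price: float   # current listed price, in dollars
    rating: float  # 0-5 stars, standing in for a review analysis
    ram_gb: int    # one example spec the user cares about


@dataclass(frozen=True)
class Constraints:
    max_price: float
    min_rating: float
    min_ram_gb: int


def choose_offer(offers: List[Offer], rules: Constraints) -> Optional[Offer]:
    """Drop offers that break any constraint, then take the cheapest (best-rated on ties)."""
    eligible = [
        o for o in offers
        if o.price <= rules.max_price and o.rating >= rules.min_rating and o.ram_gb >= rules.min_ram_gb
    ]
    return min(eligible, key=lambda o: (o.price, -o.rating), default=None)


def shop(offers: List[Offer], rules: Constraints, place_order: Callable[[Offer], str]) -> str:
    """A suggest-only tool stops after choose_offer; an agent also calls place_order."""
    pick = choose_offer(offers, rules)
    if pick is None:
        return "no offer meets the constraints; nothing purchased"
    return place_order(pick)


offers = [
    Offer("StoreA", "Laptop 14", 999.0, 4.6, 16),
    Offer("StoreB", "Laptop 14", 949.0, 4.6, 16),
    Offer("StoreC", "Laptop 13", 699.0, 3.9, 8),
]
rules = Constraints(max_price=1000.0, min_rating=4.5, min_ram_gb=16)
print(shop(offers, rules, lambda o: f"ordered {o.product} from {o.retailer} for ${o.price:.2f}"))
# ordered Laptop 14 from StoreB for $949.00
```

Keeping `place_order` as a separate callback makes it easy to put a human confirmation step in front of the actual purchase.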

7. Use Open-Source AI That Actually Competes With the Frontier Labs

Then (2024): Open-source models existed, but “open-source AI” mostly meant “significantly worse AI that you can run locally.” Llama 2 was impressive for what it was. It was not competitive with GPT-4 or Claude on anything difficult. The gap between open and closed models was large enough that for serious work, open-source was a cost-cutting compromise, not a real alternative.

Now (2026): DeepSeek R1 in January 2025 was the inflection point — a Chinese open-weight reasoning model that shocked the industry by matching top-tier performance at a fraction of the cost. “DeepSeek moment” became a phrase. The open-source ecosystem in 2026 includes models like DeepSeek V4, IBM’s Granite, Wan 2.6 for video, and LTX-2 for local video generation — all competitive with proprietary options for a wide range of tasks.

You can run these models on your own hardware and fine-tune them on your own data. Queries cost cents instead of dollars. For 80% of professional use cases, the quality gap has closed to the point where choosing open-source is rational, not just ideological.

Check it: Hugging Face Models Hub

8. Generate Images That Require Zero Photoshop Cleanup

Then (2024): Midjourney V6, DALL-E 3, and Stable Diffusion were producing genuinely impressive results. They were also producing hands with six fingers, text that looked like someone had a stroke, and backgrounds that dissolved into abstract art if you looked too closely. Every output required a post-processing pass, sometimes substantial.

Now (2026): The artifact and consistency problems haven’t completely disappeared — they never will at the edge cases — but for the vast majority of professional outputs, AI image generation in 2026 produces usable commercial-quality work on the first or second attempt. Text in images renders correctly. Hands are hands. Product shots hold their shape across multiple generations. Kling, trained specifically on photorealistic human generation, produces results that genuinely require a close look to distinguish from photography in many scenarios.

The 2024 tools were impressive but required a skilled operator and significant cleanup time. The 2026 tools have raised the floor dramatically. Non-designers are shipping marketing assets without touching Photoshop at all.

9. Talk to AI Models Running Locally on Consumer Hardware

Then (2024): Running a meaningful AI model locally meant either using tiny, heavily quantized models that felt like downgraded chatbots, or owning server-grade hardware. The idea of a powerful reasoning model running on a consumer laptop with good results was not on the table.

Now (2026): The efficiency push has been aggressive. AMD’s Ryzen AI 400 series for laptops includes Neural Processing Units (NPUs) designed to accelerate local AI tasks, and Intel and Apple build similar dedicated AI hardware into their own chips. Small, domain-specific models fine-tuned through reinforcement learning now achieve results that, on specific tasks, match or exceed much larger models. Edge AI has moved from a marketing term to an actual capability.

For privacy-sensitive work — legal documents, medical records, proprietary business data — local AI inference is now a practical option, not a trade-off. The compute cost of running meaningful AI dropped so dramatically between 2024 and 2026 that the economics of local deployment shifted entirely.

Check it: Microsoft Local AI Guide

10. Use AI That Remembers You Across Conversations

Then (2024): For most people, every conversation with an AI started from zero. ChatGPT’s first memory feature rolled out during 2024, but it held only a short list of saved details. You’d explain your project again. Your name again. Your preferences again. The context you’d built up over weeks of interactions evaporated the moment you closed the browser tab. It was like working with a brilliant contractor who got amnesia every morning.

Now (2026): Memory systems are standard across major AI platforms. Claude maintains memory across conversations, learning coding style, project structure, and user preferences automatically — without explicit configuration. Claude Code’s AutoMemory tracks how you write code and applies your patterns in new sessions. OpenAI’s memory in ChatGPT remembers names, preferences, ongoing projects, and stated goals.

This changes the nature of working with AI from “tool you operate” to something closer to “collaborator you work with over time.” The difference in productivity and usability is substantial, and it’s one of the least-discussed but most genuinely transformative changes between 2024 and 2026.
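
Under the hood, cross-session memory needs two pieces: somewhere durable to store facts, and a way to pull the relevant ones back into a new conversation. The sketch below is a hypothetical illustration, not how Claude or ChatGPT implement memory. It stores facts in a local JSON file and ranks them by naive word overlap, where a production system might use embedding search instead.

```python
import json
import re
import tempfile
from pathlib import Path
from typing import Dict, List, Set


def _words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class MemoryStore:
    """Hypothetical long-term memory: facts persist in a JSON file between sessions."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.facts: List[Dict[str, str]] = json.loads(path.read_text()) if path.exists() else []

    def remember(self, topic: str, fact: str) -> None:
        self.facts.append({"topic": topic, "fact": fact})
        self.path.write_text(json.dumps(self.facts, indent=2))  # persist immediately

    def recall(self, query: str, limit: int = 3) -> List[str]:
        """Rank stored facts by how many words they share with the new request."""
        query_words = _words(query)
        scored = [
            (len(query_words & _words(f"{item['topic']} {item['fact']}")), item["fact"])
            for item in self.facts
        ]
        relevant = [pair for pair in scored if pair[0] > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep insertion order
        return [fact for _, fact in relevant[:limit]]


def build_prompt(store: MemoryStore, user_message: str) -> str:
    """Prepend recalled memories so a brand-new session starts with prior context."""
    lines = [f"- {fact}" for fact in store.recall(user_message)] or ["- (nothing relevant yet)"]
    return "Known about this user:\n" + "\n".join(lines) + f"\n\nUser: {user_message}"


path = Path(tempfile.mkdtemp()) / "memory.json"
first_session = MemoryStore(path)
first_session.remember("style", "prefers type hints and small functions")
first_session.remember("project", "the billing service is written in Go")

second_session = MemoryStore(path)  # a new conversation reading the same file
print(build_prompt(second_session, "Add a retry to the billing service"))
```

The second session never saw the first conversation, yet its prompt opens with the billing-service fact because that fact was written to disk and matched the new request.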

Check it: OpenAI ChatGPT Memory Features

Final Thoughts

Here’s the honest summary: 2024 AI was impressive. 2026 AI is useful in ways that change how work happens.

The shift isn’t in any single capability; it’s in the combination. An AI that can remember your context, operate your computer, run multi-agent workflows, generate video with audio, write and debug its own code, and participate in scientific research represents something categorically different from what existed two years ago.

None of this is magic, and none of it is without limits. Long-form video still breaks. Agents still make mistakes in complex multi-step tasks. Open-source quality still trails the frontier on the hardest problems. The systems are impressive, not infallible.

But the trajectory is the point. The rate at which AI hardware costs have dropped, model capabilities have improved, and production-quality tools have shipped is faster than almost anyone predicted — including the people building them.

If 2024 was the year AI convinced people it could do things, 2026 is the year it started actually doing them. The question for 2028 is one nobody has a confident answer to yet.